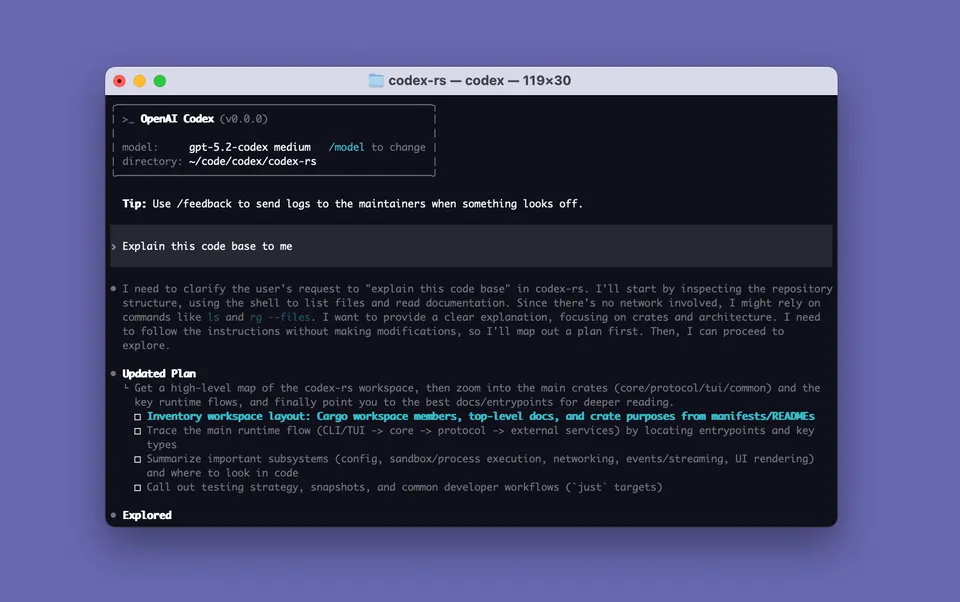

Image via github.com/openai/codex

Codex Hits 3 Million Weekly Users. OpenAI Responds With a $100 Plan to Match Claude Max.

Sam Altman celebrated Codex's growth milestone by resetting usage limits and launching a new $100 ChatGPT Pro tier built around Codex access — a direct shot at Anthropic's Claude Max.

On April 8, 2026, Codex crossed three million weekly users. The day after, OpenAI launched a new $100 ChatGPT subscription tier built around giving those users more access. The two announcements together make the competitive intent about as subtle as a billboard.

Thibault Sottiaux, who leads Codex at OpenAI, put the growth in plain terms: “Three million people are now using Codex weekly, up from two million a little under a month ago.” That’s a million new weekly users in roughly four weeks. The three-month growth rate is 5x, with month-over-month usage up 70%.
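The growth figures above can be checked against each other with a little arithmetic. The sketch below assumes constant monthly compounding and assumes that the 5x and 70% figures describe the same metric, which the article does not state explicitly; the variable names are illustrative only.

```python
# Figures quoted in the article.
users_before = 2_000_000    # weekly users a little under a month ago
users_now = 3_000_000       # weekly users at the milestone
three_month_multiple = 5.0  # "three-month growth rate is 5x"

# Relative growth in weekly users over roughly one month.
user_growth = (users_now - users_before) / users_before

# Assumption: a constant monthly rate that compounds to 5x over three months.
implied_monthly_rate = three_month_multiple ** (1 / 3) - 1

print(f"weekly-user growth: {user_growth:.0%}")
print(f"implied monthly rate for 5x in 3 months: {implied_monthly_rate:.0%}")
```

Under those assumptions, a 5x increase over three months works out to roughly 71% per month, which lines up with the reported 70% month-over-month usage figure. The 50% rise in weekly users over the same month suggests usage per user has been growing as well.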

Sam Altman posted on X to mark the occasion:

“To celebrate 3 million weekly codex users, we are resetting usage limits. We will do this every million users up to 10 million. Happy building!”

The promise is worth paying attention to. Every time Codex adds a million weekly users up to 10 million, OpenAI will reset usage limits across all plans. That’s a concrete commitment to unlock capacity as demand scales, rather than rationing access when the tool gets popular.

The New $100 Plan

The next day, OpenAI added a pricing tier that had been conspicuously missing from its lineup. A $100/month ChatGPT Pro plan now sits between the existing $20 Plus plan and the $200 Pro plan, filling a gap that Anthropic’s Claude Max had occupied unchallenged at that price point.

Altman announced it with the same brevity: “It is very nice to see Codex getting so much love. We are launching a $100 ChatGPT Pro tier by very popular demand.”

The structure of the new plan:

- 5x Codex access compared to Plus

- Same model suite as the $200 Pro plan: GPT-5.4 Pro (exclusive to the Pro tiers), plus unlimited GPT-5.4 Instant and GPT-5.4 Thinking

- Launch promotion: 10x Codex usage compared to Plus through May 31, 2026

After May 31, the standard 5x multiplier applies. The $200 Pro plan remains at 20x Codex access relative to Plus, so the plans are differentiated primarily by usage ceiling rather than model access.
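The multiplier schedule described above can be written down as a small lookup. This is only a sketch: the plan keys (`plus`, `pro_100`, `pro_200`) are made-up labels, and it assumes "through May 31" means May 31 itself is still covered by the promotion.

```python
from datetime import date

# Assumption: the promotion includes May 31, 2026 itself.
PROMO_END = date(2026, 5, 31)

# Codex usage multipliers relative to Plus, as reported in the article.
# The plan keys are hypothetical labels, not official identifiers.
BASE_MULTIPLIER = {"plus": 1, "pro_100": 5, "pro_200": 20}
PROMO_MULTIPLIER = {"pro_100": 10}  # only the $100 tier has a launch promotion


def codex_multiplier(plan: str, on: date) -> int:
    """Return the Codex multiplier versus Plus for a plan on a given date."""
    if plan in PROMO_MULTIPLIER and on <= PROMO_END:
        return PROMO_MULTIPLIER[plan]
    return BASE_MULTIPLIER[plan]


print(codex_multiplier("pro_100", date(2026, 5, 15)))  # during the promotion
print(codex_multiplier("pro_100", date(2026, 6, 1)))   # after the promotion
```

During the promotion the $100 tier sits halfway to the $200 tier's 20x; afterwards the gap between the two Pro plans widens to 4x.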

How Codex Limits Actually Work

The exact limits depend on the model and task type, and they’re measured across rolling five-hour and weekly windows rather than strict daily caps. On Plus:

- GPT-5.4-mini: 60-350 local messages per five hours

- GPT-5.3-Codex: 10-60 cloud tasks per five hours; 10-25 code reviews per week

On the new $100 Pro plan (during the 10x promo period):

- GPT-5.3-Codex: 300-1,500 local messages and 100-600 cloud tasks per five hours

- Code reviews: 200-500 per five hours

These are ranges because limits adjust dynamically based on system load. Actual throughput will vary from user to user, but the 10x promotional multiplier is substantial for developers who use Codex as a daily driver.
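To make the difference between rolling windows and strict daily caps concrete, here is a minimal sketch of a limiter that checks a five-hour window and a weekly window together. The article does not describe how OpenAI enforces its limits, so the class name, the fixed caps, and the logic are all assumptions made for illustration; the real limits are dynamic ranges, not constants.

```python
from collections import deque

FIVE_HOURS = 5 * 60 * 60
ONE_WEEK = 7 * 24 * 60 * 60


class RollingWindowLimiter:
    """Hypothetical limiter: allow an event only if every window has room."""

    def __init__(self, limits):
        # limits maps window length in seconds -> max events in that window
        self.limits = limits
        self.events = deque()  # timestamps of accepted events, oldest first

    def allow(self, now):
        longest = max(self.limits)
        # Forget events that have aged out of the longest window.
        while self.events and self.events[0] <= now - longest:
            self.events.popleft()
        # Reject if any window is already at its cap.
        for window, cap in self.limits.items():
            used = sum(1 for t in self.events if t > now - window)
            if used >= cap:
                return False
        self.events.append(now)
        return True


# Illustrative caps only: 2 events per five hours, 3 per week.
demo = RollingWindowLimiter({FIVE_HOURS: 2, ONE_WEEK: 3})
print(demo.allow(0), demo.allow(60), demo.allow(120))
```

With these toy caps the third call is refused because the five-hour window is full. Capacity then returns gradually as earlier events age out, rather than all at once at midnight as it would with a daily cap.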

Why This Plan Exists

ChatGPT’s plan structure had an awkward gap. The $20 Plus plan was sufficient for casual users. The $200 Pro plan was genuinely powerful but expensive for individual developers. There was nothing in the middle for the growing cohort of developers who had made Codex a primary tool and wanted more headroom without paying $200.

That gap also happened to be where Anthropic’s Claude Max lives, at $100/month. Claude Code is the tool Codex competes with most directly. The pricing match is not coincidental.

The full ChatGPT plan lineup now looks like this:

| Plan | Price | Codex vs Plus |
|---|---|---|
| Free | $0 (ad-supported) | baseline |
| Go | $8/month | - |
| Plus | $20/month | 1x |
| Pro | $100/month | 5x (10x through May 31) |
| Pro | $200/month | 20x |
| Business | $25/user/month | - |
| Enterprise | custom | custom |

The $100 Pro and $200 Pro plans share the same model access. The only meaningful difference is how much you can do in a given window.
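One way to compare the tiers is to divide each price by its Codex multiplier, giving the cost of "one Plus worth" of Codex usage. The sketch below uses only the prices and multipliers quoted in the article; the plan labels are informal, and the comparison deliberately ignores everything else the plans include, such as model access.

```python
# (monthly price in USD, Codex multiplier vs Plus), as listed in the article.
plans = {
    "Plus": (20, 1),
    "Pro $100": (100, 5),
    "Pro $100 (promo)": (100, 10),
    "Pro $200": (200, 20),
}

# Dollars paid per "one Plus worth" of Codex usage.
cost_per_unit = {name: price / mult for name, (price, mult) in plans.items()}

for name, cost in cost_per_unit.items():
    print(f"{name:<18} ${cost:.2f} per 1x of Plus-level Codex usage")
```

On this measure the standard $100 tier costs the same per unit as Plus ($20), while the $200 tier and the promotional $100 tier both come to $10. Outside the promotion, the $100 plan buys more headroom and the Pro model suite rather than a volume discount on Codex.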

What Developers Are Getting More Of

Codex has been building out its surface area aggressively. The core product is a cloud-based AI software engineering agent that runs tasks asynchronously, so you can kick off multiple parallel sessions while you do other work. It’s not just autocomplete or inline suggestions — it can take a task description, clone a repo, write code, run tests, and return a pull request.

With GPT-5.4 as the backend model, Codex sessions now have access to a 1M token context window (experimental, enabled via config). The model handles complex, multi-file refactors, library migrations, and debugging chains that would have required substantial hand-holding six months ago.

The limits weren’t theoretical before the 3M milestone — heavy Codex users were genuinely hitting them. The reset, and the new plan tier, are OpenAI acknowledging that usage outgrew the original assumptions.

Sources

- OpenAI Codex celebrates 3 million weekly users, CEO Sam Altman resets usage limits — BusinessToday

- OpenAI’s new $100 ChatGPT Pro plan targets Claude Max with five times the Codex access — The Next Web

- ChatGPT finally offers $100/month Pro plan — TechCrunch

- OpenAI introduces ChatGPT Pro $100 tier with 5X usage limits for Codex — VentureBeat

- Codex rate card — OpenAI Help Center

- Sam Altman on X