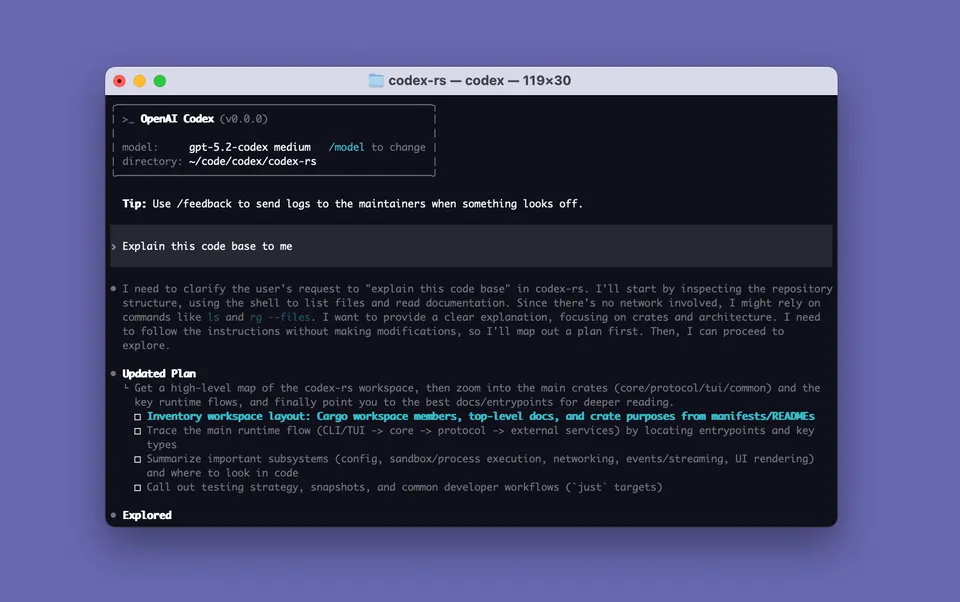

Splash screen from openai/codex on GitHub

OpenAI Launched a $100 ChatGPT Plan and Named Anthropic as the Target

OpenAI's new $100/month ChatGPT Pro plan goes after Claude Max directly: same price, five times more Codex than Plus, and access to the same models as the $200 tier. Here's what you get and why the timing matters.

OpenAI added a new $100 per month ChatGPT plan on April 9, 2026, and positioned it directly against Anthropic. The new tier shares its name with the $200 tier: both are called “Pro,” which creates some confusion. The differentiators are the price and the usage ceiling: $100 gets you five times the Codex usage of Plus, while $200 gets the full cap. Model access is identical across both Pro tiers.

The competitive target is obvious. Anthropic’s Claude Max costs $100 per month. Until this week, there was nothing in OpenAI’s lineup that matched that price point while offering serious agentic coding capacity.

The Pricing Structure

Before this launch, OpenAI’s consumer plans were:

| Plan | Price | Notes |
|---|---|---|
| Plus | $20/month | Base access, limited Codex |
| Pro | $200/month | Unlimited-ish Codex, all models |

After:

| Plan | Price | Notes |
|---|---|---|
| Plus | $20/month | Base access, limited Codex |
| Pro ($100) | $100/month | 5x Codex vs Plus, all Pro models |
| Pro ($200) | $200/month | Full Codex cap, all Pro models |

The $100 plan launched with a promotion: through May 31, 2026, subscribers get 10x the Codex usage of Plus rather than 5x. After that date, it drops to the standard 5x.

The Codex rate card for the $100 Pro plan (with the 2x launch boost active) looks like this:

- GPT-5.3-Codex: 600-3,000 local messages and 200-1,200 cloud tasks per 5-hour window

- GPT-5.4: 400-2,000 local messages per 5-hour window

- Code Reviews: 400-1,000 per 5-hour window

These numbers include the temporary doubling, so halve them from June 1, once the promotion has ended on May 31.
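To make the halving concrete, here is a minimal Python sketch of the arithmetic. It assumes the ranges listed above are the promotional figures and that the standard limits are exactly half of them from June 1, 2026. The names `PROMO_LIMITS`, `PROMO_END`, and `limits_on` are hypothetical and only for illustration; they are not part of any OpenAI API.

```python
from datetime import date

# Promotional (min, max) ranges per 5-hour window, copied from the list above.
PROMO_LIMITS = {
    "GPT-5.3-Codex local messages": (600, 3000),
    "GPT-5.3-Codex cloud tasks": (200, 1200),
    "GPT-5.4 local messages": (400, 2000),
    "Code Reviews": (400, 1000),
}

# Assumption: the launch boost applies through this date, inclusive.
PROMO_END = date(2026, 5, 31)


def limits_on(day: date) -> dict[str, tuple[int, int]]:
    """Return the (min, max) ranges assumed to apply on the given day."""
    if day <= PROMO_END:
        return dict(PROMO_LIMITS)
    # After the promotion, the 2x boost is removed, so every bound is halved.
    return {name: (lo // 2, hi // 2) for name, (lo, hi) in PROMO_LIMITS.items()}


if __name__ == "__main__":
    for name, (lo, hi) in limits_on(date(2026, 6, 1)).items():
        print(f"{name}: {lo}-{hi} per 5-hour window")
```

Under these assumptions, the post-promotion range for GPT-5.3-Codex local messages works out to 300-1,500 per 5-hour window.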

What’s Included

Both Pro tiers now include access to the same model suite:

- GPT-5.4 and GPT-5.4 Instant (Thinking)

- GPT-5.4 Pro (the exclusive tier variant)

- GPT-5.3-Codex for coding tasks

- GPT-5.3-Codex-Spark (research preview, with separate usage limits, only for Pro subscribers)

- GPT-5.3 Instant Mini as the new fallback model when rate limits are hit

GPT-5.3 Instant Mini replaced GPT-5 Instant Mini as the rate-limit fallback. OpenAI describes it as more natural in conversation, with stronger contextual awareness. It doesn’t appear in the model picker because it only activates when you’re over your primary limit.

The Codex Context

OpenAI’s timing tracks with Codex’s growth. On April 8, the day before the plan launched, the company announced that Codex had crossed three million weekly users, a figure it described as a 5x increase over three months.

Three million weekly users for a coding agent is a real number. Cursor has over one million daily active users. Claude Code hasn’t published a weekly user count. The weekly vs. daily framing makes direct comparison difficult, but the trajectory matters: Codex is growing fast, and OpenAI is trying to capture higher-value segments of that growth with a mid-tier plan.

The $100 plan is positioned specifically for “longer, high-effort Codex sessions” in OpenAI’s framing. That’s the use case where Claude Code’s Max subscription has been the go-to: developers running extended agent sessions, reviewing large diffs, and pushing through multi-file refactors.

Old Models Going Away

On April 14, days after the new plan launched, OpenAI removed several older Codex model options from the model picker. The models that no longer appear:

- gpt-5.2-codex

- gpt-5.1-codex-mini

- gpt-5.1-codex-max

- gpt-5.1-codex

- gpt-5.1

- gpt-5

The underlying API access may persist for a grace period, but new sessions will default to the current generation (GPT-5.3-Codex and GPT-5.4) rather than the older versions.

How This Compares to Claude Max

At $100 per month, here’s how the two plans actually compare for coding work:

Claude Max ($100/month):

- Claude Code with extended usage (15x more than Pro’s 5-hour session cap)

- 1 million token context window in Claude Code

- All Claude models: Opus 4.6, Sonnet 4.6, Haiku 4.5

- Access to Claude Cowork

- Claude Code routines (new research preview, up to 15 cloud runs/day)

OpenAI Pro ($100/month):

- GPT-5.3-Codex in Codex app, with 5x Plus usage (10x through May 31)

- GPT-5.4 and all current OpenAI models

- GPT-5.3-Codex-Spark in research preview

- Cloud task execution in Codex’s sandboxed VM environment

The meaningful differences: Claude Code has deeper terminal integration and a larger context window at this tier. Codex has cloud sandboxing and supports task parallelism through the web interface with GPT-5.3-Codex-Spark. For vibe coding and frontend prototyping, Codex’s browser preview has been a standout. For deep multi-file refactors from the terminal, Claude Code’s context and integration tend to win.

Neither plan is clearly dominant for all workflows. The choice has been a regular topic in developer forums since the plans landed at the same price point.

What Developers Are Saying

The Hacker News thread that followed the announcement showed the familiar split: developers who had been on the $200 Pro plan and saw the $100 option as a downgrade, versus developers who had been on Plus and saw it as a meaningful step up. The $200 tier holders pointed out that the usage limits drop significantly at $100. The Plus upgraders noted that 5-10x more Codex is still a lot more Codex.

A recurring comment: the 5-hour window resets are more punishing than monthly caps. Running a long autonomous Codex session can drain a 5-hour window quickly, and then you’re waiting.

OpenAI’s approach here is a direct response to Claude Max gaining ground. The gap at $100 in its lineup was visible, and Anthropic had already filled it. Now OpenAI has too.

Sources:

- OpenAI community: Introducing New $100/month Pro Tier

- Codex pricing (OpenAI Developers)

- Codex rate card (OpenAI Help Center)

- About ChatGPT Pro plans (OpenAI Help Center)

- TechCrunch: ChatGPT finally offers $100/month Pro plan

- The Next Web: OpenAI’s new $100 ChatGPT Pro plan targets Claude Max

- 9to5Mac: OpenAI introduces $100/month Pro plan aimed at Codex users

- VentureBeat: OpenAI introduces ChatGPT Pro $100 tier with 5X usage limits for Codex