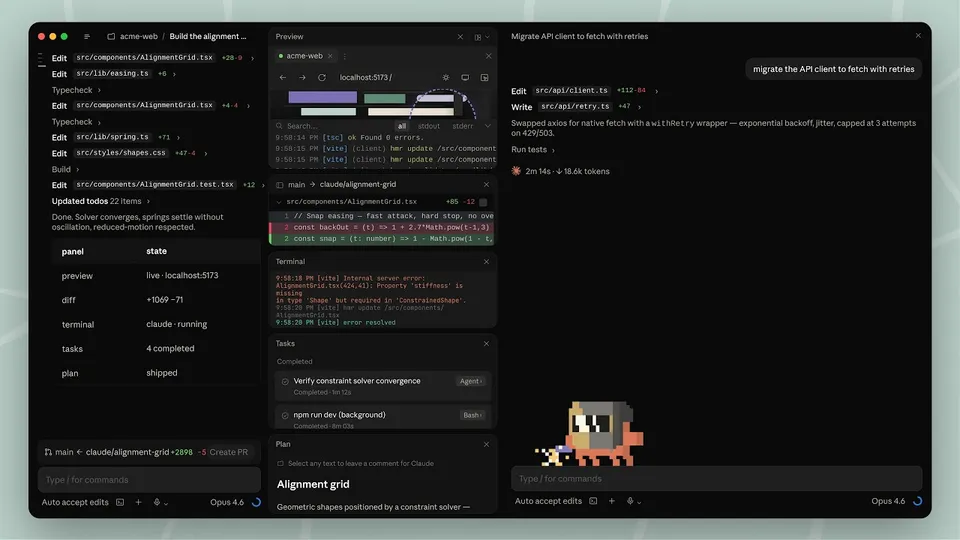

Screenshot from Anthropic's announcement of Claude Code desktop redesign

Anthropic's Worst Week Before an IPO: Outage, Backlash, and a Fortune Cover Story

On April 13, Claude went down. On April 14, Fortune and VentureBeat called it a trust crisis. Here's what actually happened, what Anthropic admitted, and what developers can do right now.

Anthropic has had a rough two days.

On April 13, hundreds of users hit HTTP 500 errors across claude.ai, the API, and Claude Code for several hours. The next day, Fortune published an in-depth piece on the growing user backlash over Claude’s performance decline, framing it as a potential threat to the company’s reported October IPO. VentureBeat ran its own version of the same story under the headline “Is Anthropic ‘Nerfing’ Claude?” The Register had already run a data-backed analysis on April 13, noting that quality complaints in Claude’s GitHub issue tracker were on pace for more than 20 issues in April’s first 13 days, a 3.5x increase over the January-February baseline.

This is a continuation of the story we covered April 11 with the AMD director’s performance data. But the story has now escalated into something different: a business-level problem, not just a developer complaint.

What Happened on April 13

Multiple users across Europe and North America reported intermittent HTTP 500 errors starting in the early afternoon UTC. The outage hit claude.ai, the Claude API, and Claude Code. Anthropic’s status page eventually acknowledged “degraded performance” before the incident closed several hours later.

The timing was bad. The GitHub issue filed by AMD Director Stella Laurenzo on April 2 had already accumulated over 2,200 reactions and 293 comments. The April 13 outage pushed the frustration higher. Developers already questioning whether Claude had been degraded now had a service reliability complaint layered on top of a performance complaint. According to the Hacker News thread “Claude loses its >99% uptime in Q1 2026,” this is one of several incidents in a period where Anthropic’s reliability has visibly slipped.
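For a sense of scale on the 99% figure in that thread title, here is a small arithmetic sketch. It assumes uptime is measured over the full calendar quarter for a single service, which the thread title does not specify, so treat the result as a rough yardstick rather than Anthropic's own accounting.

```python
# Q1 2026: January (31) + February (28, 2026 is not a leap year) + March (31).
days_in_q1 = 31 + 28 + 31
hours_in_q1 = days_in_q1 * 24

# Assumption: the ">99%" threshold applies to the whole quarter.
uptime_target = 0.99
downtime_budget_hours = hours_in_q1 * (1 - uptime_target)

print(f"Hours in Q1 2026: {hours_in_q1}")
print(f"Downtime allowed at 99% uptime: {downtime_budget_hours:.1f} hours")
```

Under that assumption, a quarter stays above 99% only if total downtime is under roughly 21.6 hours, so a handful of multi-hour incidents is enough to cross the line.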

What Fortune and VentureBeat Are Reporting

Both outlets published pieces on April 14 drawing on months of complaint data, Boris Cherny’s public statements, and anonymous sources inside and close to Anthropic.

The core claims:

The effort-level change happened on March 3. Anthropic switched the default effort setting for Claude Opus 4.6 from “high” to “medium” that day, described internally as effort level 85 out of 100. Boris Cherny, head of Claude Code, said publicly that this was done in response to user feedback that Claude was consuming too many tokens per task. Many users say they were never told.

Compute pressure is real. Multiple outlets are reporting speculation, backed by some sourcing, that Anthropic may be under more GPU and data center pressure than its public communications suggest. Anthropic has signed fewer large-scale data center agreements than OpenAI or Google. The company has declined to comment on the specifics.

The IPO is on the line. Anthropic is reportedly targeting an IPO as early as October 2026 at a $380 billion valuation. Fortune’s framing is explicit: user dissatisfaction is “particularly threatening” to Anthropic because the company has built its brand specifically around being more transparent and more aligned with its users’ interests than its competitors. A pattern of silent configuration downgrades undercuts that positioning.

The Three Incidents in Seven Days Pattern

Some analysts and observers have pointed to a clustering of incidents in late March and early April that looks worse in aggregate than any individual event would:

- March 26: Fortune reported that Anthropic accidentally exposed draft materials for an unreleased model called Mythos via a CMS misconfiguration in a cloud storage asset. We covered this when it broke.

- March 31: A source map file shipped inside an npm package exposed 512,000 lines of Claude Code’s TypeScript source. We covered this in detail.

- April 2 through April 14: A GitHub issue filed by an AMD director lit up the developer community, followed by outages, followed by two major news pieces calling the situation a trust crisis.

That each of these incidents had a benign or at least non-malicious explanation does not change how they read to developers and potential IPO investors watching closely.

There is also a new Bloomberg report published April 14: the US Treasury is seeking access to Anthropic’s Mythos model to evaluate its cybersecurity risks. That adds a government relations dimension to an already complicated week.

What Anthropic Actually Admitted

Boris Cherny confirmed, in public comments referenced by Fortune and VentureBeat:

- The adaptive thinking feature launched February 9, letting the model decide how much reasoning to apply to a task rather than using a fixed budget. This changed how thinking depth manifested for complex tasks.

- The default effort level was changed from high to medium on March 3. This was not disclosed in a changelog.

- As a response to the backlash, Anthropic changed the default effort level back to high for API-key users, Bedrock, Vertex, Foundry, Team, and Enterprise users on April 7.

- Cherny said the company will test “defaulting Teams and Enterprise users to high effort, to benefit from extended thinking even if it comes at the cost of additional tokens and latency.”

The company’s position is that this was a product configuration change, not a model downgrade. The model itself was not changed. Users who experienced degradation were experiencing the effects of lower default effort, not a weaker model. That is technically accurate, but for the power users who noticed, the distinction does not help much.

What Benchmark Data Shows

The Register’s analysis noted that Claude GitHub issue tracker data shows a sharp increase in quality complaints. BridgeMind published claims that Claude Opus 4.6 fell from 83.3% accuracy to 68.3% on the BridgeBench hallucination benchmark, drawing headlines about a “67% drop.” Multiple AI researchers have since flagged the BridgeBench methodology as flawed and the claim as misleading. Margin Lab’s SWE-Bench Pro data shows no substantive change in Claude’s performance on that benchmark since February.
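As a quick check on the BridgeBench numbers, the sketch below works out the drop two ways using only the two accuracy figures quoted above. It does not reproduce BridgeMind's methodology, which is not described here.

```python
# Accuracy figures as quoted from BridgeMind's claim; nothing else is assumed.
before = 83.3  # percent accuracy
after = 68.3   # percent accuracy

absolute_drop = before - after                 # in percentage points
relative_drop = absolute_drop / before * 100   # as a share of the starting score

print(f"Absolute drop: {absolute_drop:.1f} percentage points")
print(f"Relative drop: {relative_drop:.1f}%")
```

That works out to 15.0 percentage points, or about 18.0% relative to the starting score. Neither figure is 67, so the headline number rests on some other calculation that the quoted accuracies alone do not support.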

The honest read: benchmark performance did not clearly degrade. Default configuration did change. Power users who relied on Claude doing more reasoning per task by default noticed a real behavioral shift, even if the model’s underlying capabilities were unchanged.

What Developers Can Do Right Now

For users on Max, API, Bedrock, or Vertex plans, the effort level was reset to high on April 7 according to Claude Code’s changelog. If you are a Pro or standard user, the default remains medium.

To override this yourself, add the following to your CLAUDE.md file in any project:

    effort:high

Or set the environment variable before running Claude Code:

    export CLAUDE_EFFORT=high

You can also pass it directly per-prompt in the Claude Code interface using /effort high.
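If you want to confirm that a project actually carries one of these overrides, a small check along the following lines may help. This is a sketch, not an official tool: it assumes the `effort:high` line and the `CLAUDE_EFFORT` variable behave as described above (neither is verified here against Claude Code's documentation), and `effort_pin_status` is a hypothetical helper name.

```python
import os
from pathlib import Path

# Assumption: both names are taken from the article as written and are not
# verified against Claude Code's documentation.
PIN_LINE = "effort:high"
ENV_VAR = "CLAUDE_EFFORT"


def effort_pin_status(project_dir, env=None):
    """Report where, if anywhere, a high effort level is pinned explicitly."""
    env = os.environ if env is None else env
    claude_md = Path(project_dir) / "CLAUDE.md"

    pinned_in_file = False
    if claude_md.is_file():
        lines = claude_md.read_text(encoding="utf-8").splitlines()
        # Ignore spaces and case so "effort: high" also counts.
        pinned_in_file = any(
            line.replace(" ", "").lower() == PIN_LINE for line in lines
        )

    pinned_in_env = env.get(ENV_VAR, "").strip().lower() == "high"
    return {"claude_md": pinned_in_file, "env": pinned_in_env}


if __name__ == "__main__":
    status = effort_pin_status(".")
    if not any(status.values()):
        print("No explicit effort pin found; the platform default applies.")
    else:
        print(f"Effort pinned: {status}")
```

Run from a project root, it reports whether the pin lives in CLAUDE.md, in the environment, or nowhere. A check like this could sit in a pre-commit hook or CI step so a missing pin is noticed before it affects output quality.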

The Broader Stakes

The performance controversy matters more for Anthropic than it would for most companies because the brand is built on trust and honesty. Anthropic has spent years positioning itself as the AI lab most willing to acknowledge limitations, publish safety research, and communicate transparently with developers. A sequence of undisclosed configuration changes, compounded by weeks of silence while developer complaints accumulated, is not consistent with that positioning.

For developers who bet their workflows on Claude Code, the practical lesson is straightforward: pin your effort level explicitly in CLAUDE.md rather than relying on the platform default. That setting should not change unless you change it.

Related coverage: The AMD director who measured Claude’s quality collapse | Claude Code’s source code leak via npm | The Mythos leak and what it revealed

Sources: Fortune (April 14) | VentureBeat (April 14) | The Register (April 13) | Claude Code changelog | Hacker News: Claude uptime thread